Transformer-XL consists of a segment-level recurrence mechanism and a novel positional encoding scheme. Our method not only enables capturing longer-term dependency, but also resolves the context fragmentation problem. As a result, Transformer-XL learns dependency that is 80% longer than RNNs and 450% longer than vanilla Transformers, achieves better performance on both short and long sequences, and is up to 1,800+ times faster than vanilla Transformers during evaluation. Notably, we improve the state-of-the-art results of bpc/perplexity to 0.99 on enwiki8, 1.08 on text8, 18.3 on WikiText-103, 21.8 on One Billion Word, and 54.5 on Penn Treebank (without finetuning). When trained only on WikiText-103, Transformer-XL manages to generate reasonably coherent, novel text articles with thousands of tokens. Our code, pretrained models, and hyperparameters are available in both TensorFlow and PyTorch.

and pronunciations may not compare to Markle occasionally pushing a vowel sound. Markle's not the only one whose changing speech patterns have come under.

Beyond Compare Grammars

A file format specification can include a grammar definition, used for syntax highlighting and to help define which differences are important. For example, our standard file format for C/C++ source code includes a grammar that recognizes keywords, comments, and literal strings.
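To make the grammar idea concrete, here is a minimal Python sketch of a tokenizer that recognizes keywords, comments, and literal strings in C/C++-like text, and then treats comment and whitespace changes as unimportant. This is not Beyond Compare's grammar format or its implementation: the keyword list, the regular expressions, and the function names (`tokenize`, `important_tokens`, `differs_importantly`) are all assumptions made up for illustration, and the rules cover only a small subset of real C/C++ syntax.

```python
import re

# Hypothetical, heavily simplified keyword list (an assumption, not a full C/C++ set).
KEYWORDS = {"int", "char", "void", "return", "if", "else", "for", "while"}

# One alternative per token kind; the first alternative that matches wins.
TOKEN_RE = re.compile(
    r"""
    (?P<comment>//[^\n]*|/\*.*?\*/)      # line comment or block comment
  | (?P<string>"(?:\\.|[^"\\\n])*")      # double-quoted literal string
  | (?P<word>[A-Za-z_]\w*)               # identifier or keyword
  | (?P<space>\s+)                       # whitespace
  | (?P<other>.)                         # any other single character
    """,
    re.VERBOSE | re.DOTALL,
)


def tokenize(source):
    """Yield (kind, text) pairs that together cover the whole input."""
    for match in TOKEN_RE.finditer(source):
        kind, text = match.lastgroup, match.group()
        if kind == "word":
            kind = "keyword" if text in KEYWORDS else "identifier"
        yield kind, text


def important_tokens(source):
    """Keep only tokens whose changes we choose to treat as important."""
    return [(k, t) for k, t in tokenize(source) if k not in ("comment", "space")]


def differs_importantly(left, right):
    """True if the two texts differ in anything besides comments and whitespace."""
    return important_tokens(left) != important_tokens(right)


if __name__ == "__main__":
    a = 'int x = 1; // counter\n'
    b = 'int x = 1;   /* reworded comment */\n'
    print(differs_importantly(a, b))
```

Classifying tokens first is what lets a comparison decide which kinds of change matter. In this sketch the choice is hard-coded (comments and whitespace are ignored), whereas a real tool would presumably make it configurable.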

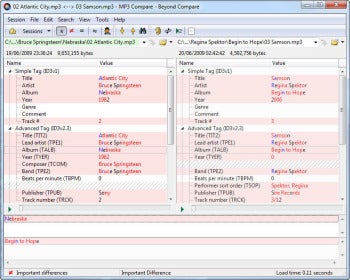

What do you like best about Beyond Compare? With the help of this tool, we can easily compare files and folders and change them based on the comparison.

Transformers have the potential to learn longer-term dependency, but are limited by a fixed-length context in the setting of language modeling. We propose a novel neural architecture, Transformer-XL, that enables learning dependency beyond a fixed length without disrupting temporal coherence.
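The fixed-length limitation, and the effect of carrying state across segments, can be pictured with a toy Python sketch. It is not a model and not the authors' code: it only tracks which earlier tokens each position could draw on when a sequence is processed in fixed-length segments, with and without a cache of the previous segment. In the real architecture the cache would hold hidden states rather than tokens. The names `visible_context`, `segment_len`, and `mem_len` are assumptions chosen for this illustration.

```python
def split_into_segments(tokens, segment_len):
    """Cut the sequence into consecutive fixed-length segments."""
    return [tokens[i:i + segment_len] for i in range(0, len(tokens), segment_len)]


def visible_context(tokens, segment_len, mem_len=0):
    """For every position, list the tokens it can draw on (causal order).

    mem_len == 0 imitates a fixed-length context: each segment starts from
    scratch. mem_len > 0 keeps the last mem_len items from earlier segments
    as a cache that the next segment may also use.
    """
    contexts = []
    memory = []  # stands in for cached hidden states in a real model
    for segment in split_into_segments(tokens, segment_len):
        for i in range(len(segment)):
            contexts.append(memory + segment[: i + 1])
        if mem_len > 0:
            memory = (memory + segment)[-mem_len:]
    return contexts


if __name__ == "__main__":
    tokens = list("abcdefgh")
    # First token of the second segment, without and with a cache.
    print(visible_context(tokens, segment_len=4)[4])
    print(visible_context(tokens, segment_len=4, mem_len=4)[4])
```

Without a cache, the first token of each new segment sees nothing before it, which is one way to read the fragmentation that a fixed-length context causes. With a cache, that same token can reach back into the previous segment.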
